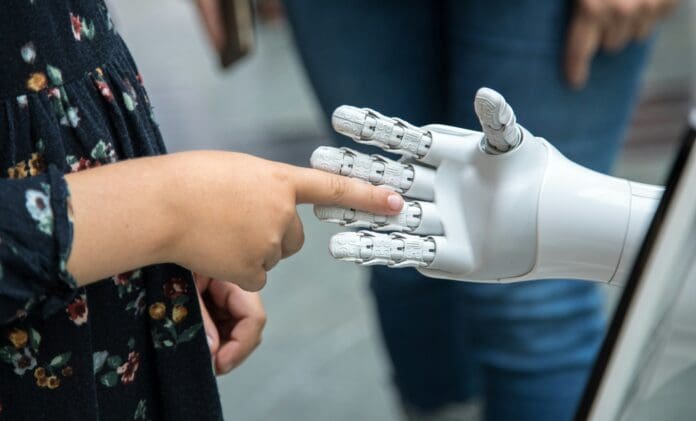

Engineers at the University of California San Diego have developed a wearable system that lets users control robots through simple arm gestures, even while running, shaking, or submerged in rough water. That robustness to movement addresses one of the main barriers to practical, real-world gesture control.

Gesture-based interfaces have long promised a hands-free way to operate robots, medical devices, or tools. However, they tend to fail once the user starts moving. Background motion, known as “motion noise,” makes it difficult for sensors to distinguish between intended gestures and unintentional movement. The new system tackles that problem head-on by combining soft, stretchable sensors with a deep-learning model that filters out motion interference in real time.

Attached to an elastic armband, the thin electronic patch integrates motion and muscle sensors, a Bluetooth microcontroller, and a flexible battery. It continuously gathers data from the wearer’s arm and uses a deep-learning model to interpret gestures in real time, even under heavy vibration or turbulence. In tests, the system maintained precise control of a robotic arm during running, vehicle movement, and simulated ocean conditions, all situations that would typically cause signal breakdown.

According to Interesting Engineering, this advance could reshape how people interact with technology in demanding or unpredictable environments. In healthcare, patients with limited mobility could direct robotic aids through broad, natural movements rather than small, difficult motions. In industrial or emergency settings, workers could control tools or drones hands-free while maintaining focus on their surroundings.

The system also holds potential for defense and security use. Soldiers, divers, or field operators could silently issue commands to unmanned systems or robots through gesture alone, maintaining communication even in noise-heavy, underwater, or high-motion scenarios where voice or traditional controls are impractical.

By merging soft electronics with adaptive AI, the research offers a path toward intuitive, reliable human–machine interfaces built for real life — not just the lab. The study marks a significant step toward wearable technology that truly keeps pace with the human body in motion.

The research was published here.