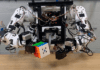

Cornell University researchers have developed a silent-speech recognition interface that uses acoustic sensing and artificial intelligence to continuously recognize up to 31 unvocalized commands based on lip and mouth movements.

The low-power wearable interface, called EchoSpeech, needs just a few minutes of user training data before it can recognize commands, and it can run on a smartphone.

Ruidong Zhang, a doctoral student in information science, is the lead author of “EchoSpeech: Continuous Silent Speech Recognition on Minimally obtrusive Eyewear Powered by Acoustic Sensing,” which will be presented at the Association for Computing Machinery Conference on Human Factors in Computing Systems (CHI) this month in Hamburg, Germany.

“For people who cannot vocalize sound, this silent speech technology could be an excellent input for a voice synthesizer. It could give patients their voices back,” Zhang said of the technology’s potential use with further development.

In its present form, EchoSpeech could be used to communicate with others via smartphone in places where speech is inconvenient or inappropriate, such as a noisy restaurant or a quiet library.

As reported by techxplore.com.