Robots To Soon Touch The World Around Them

Depending on how you count them, humans have a variety of senses. The big five – sight, smell, hearing, taste, and touch – provide us with the vast majority of the information we need to operate in the world. In some applications, robots need replicas of these senses to be most useful to us. Several of the senses already have machine counterparts, or work is well underway to create them. Video analytics provides machine vision. Audio analysis, including speech recognition, is a well-developed field. There is even work to imbue machines with a “sense of smell.” Soon, robots could be getting a sense of touch as well.

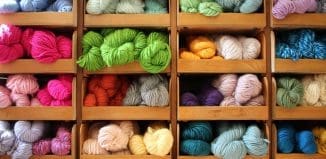

SynTouch is developing a haptic sensor that aims to give robots the ability to “feel” textures. Using this sensor, the company has compiled a taxonomy of more than 500 materials, from fabrics to stone, in a database called SynTouch Standard. The taxonomy is based on 15 factors, such as thermal properties, friction, and coarseness. SynTouch intends to standardise the classification of how materials feel and to take subjectivity out of the matter.

SynTouch grew out of the University of Southern California’s Medical Device Development Facility. The team there focused its research on prosthetics, and one of its most important insights was that by touching something, you also change it. Your fingers give off heat, and no matter how gentle you are, you apply pressure to the surface you’re touching. In observing a material by touch, you change it, and what you observe is in part the changes you yourself introduce. SynTouch’s sensors try to emulate this by emitting heat and exerting pressure in a way similar to human fingers.

Soon, robots could not only do what humans can, but also “feel” the world as we do, or at least touch it the way humans do.